The third #EvalTuesdayTip from our six-week series on evaluator blind spots is on confirmation bias.

Evaluators (as well as all other members of the human race) suffer from confirmation bias. Confirmation bias is, in short, “the tendency to search for, interpret and recall information in a way that supports what we already believe.” Although this is unintentional, evaluators may end up interpreting data to confirm an existing hypothesis rather than allowing the data to lead the interpretation.

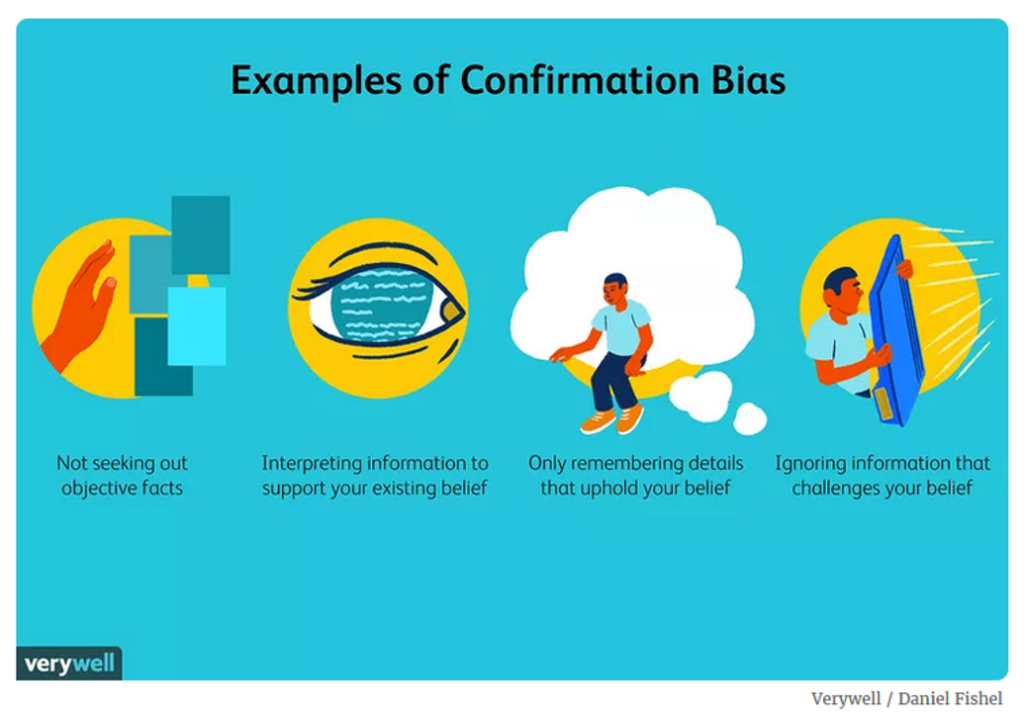

Some examples of confirmation bias include:

- Not seeking out objective data

- Interpreting data to support an existing hypothesis

- Only remembering data that upholds the existing hypothesis

- Ignoring data that contradicts the existing hypothesis

Fortunately, being aware that evaluators fall into the same confirmation bias as everyone else is the first step towards addressing this pervasive bias.

Other ways of addressing this bias are to:

- Widen the evaluation team to include independent analysts who are delinked from other elements of the evaluation.

- Engage “double-blind” fieldworkers who do not know which organization is being evaluated. This approach is used when conducting a qualitative impact evaluation with the Qualitative Impact Assessment Protocol (QuIP) created by Bath Social and Development Research.

- Create an appropriate distance between the evaluator and the interviewee (so that the evaluator is also aware of the interviewee’s confirmation bias).

- Require data collectors to validate their data with supporting evidence (photos, videos, documents, etc.) so that evaluators can cross-check it.

- Ensure evaluation instruments are pretested by a variety of stakeholders and adapted based on their feedback.

- Ask each member of the team to think about their confirmation bias and openly discuss it.

- Have a “critical evaluator friend” review the draft evaluation looking for any evidence of confirmation bias.

For additional thoughts on confirmation bias, read the ODI blog post “How cognitive biases affect monitoring, evaluation and learning – and what can be done about it.”